Agentic AI Is 6–7x’ing Underwriting Capacity

Welcome to DX Brief - Insurance, where every week we interview practitioners and distill industry podcasts and conferences into what you need to know.

In today's issue:

Scaling premium binding 6-7x: How agentic AI is reshaping underwriting capacity

“AI-native” is a full business model, not just a technology choice: here’s how it works at Gyde, the first AI-native insurance, wealth, and health brokerage

Stop optimizing old processes – insurers who can't reimagine their entire business will lose to those who can

1. Scaling premium binding 6-7x: How agentic AI is reshaping underwriting capacity

Building Tomorrow’s Insurer podcast, The 27-Hour Coding Sprint: How Agentic AI is Transforming Insurance Operations (Feb 9, 2026)

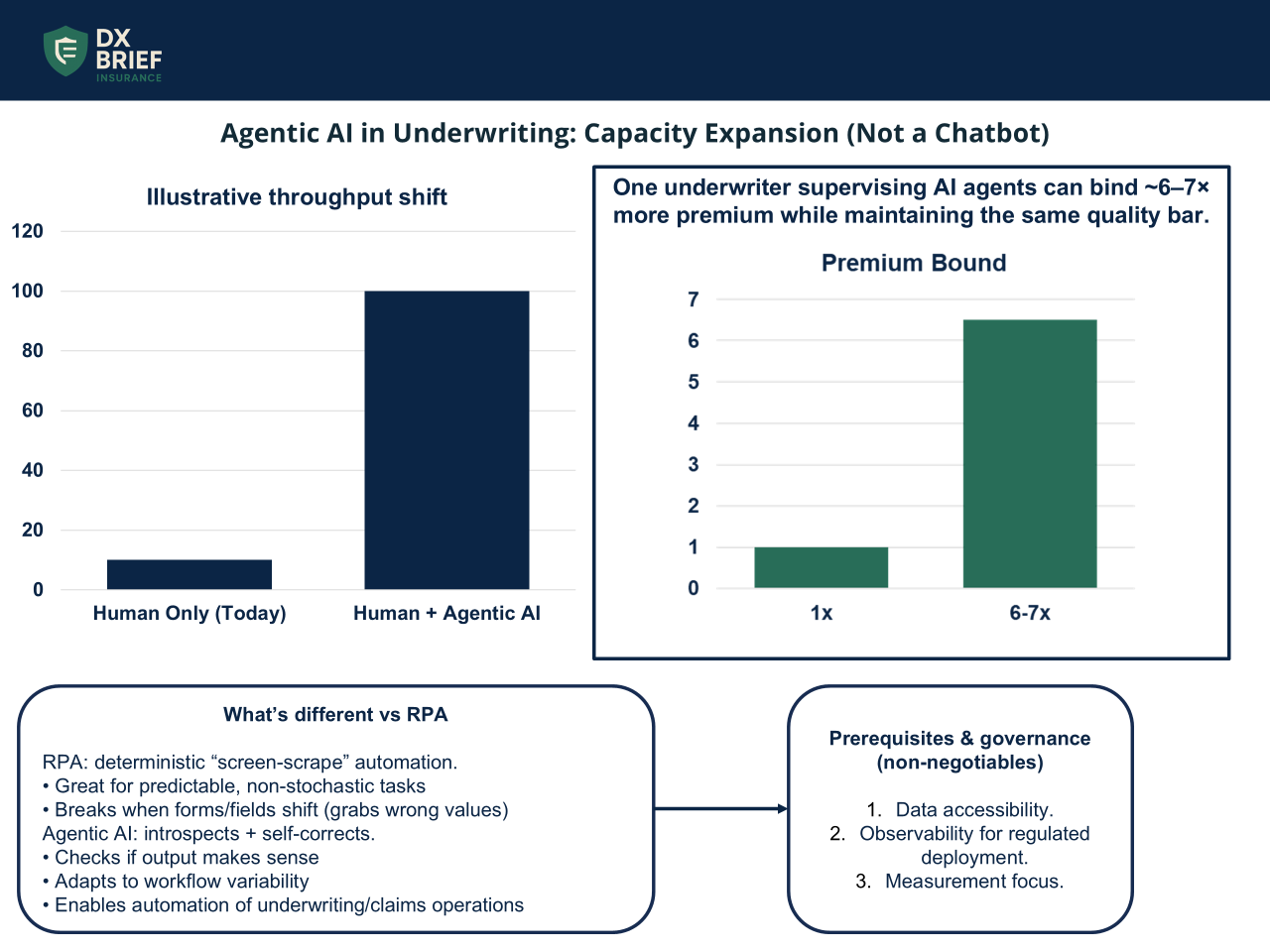

Background: Your underwriters can do 3 submissions a day. What if they could supervise agents doing 100? Alex Taylor, who runs emerging tech at QBE Ventures, has watched agentic coding systems run for 27 consecutive hours – writing, testing, fixing, and proving code without stopping. The same pattern is now hitting insurance operations. One underwriter managing a team of AI agents can bind 6-7x more premium while maintaining the same quality bar. Here's what's actually working and what failed.

TLDR:

Agentic AI differs from RPA because it introspects and self-corrects. When something shifts, it adapts rather than blindly grabbing the wrong value. That flexibility is why insurers are partnering with startups that didn't exist 18 months ago.

The chatbot-in-the-corner approach failed: analytics showed 99% of users ignored it. The winning pattern is AI as a colleague that surfaces decisions in your existing tools with full reasoning visible – bind, decline, or refer in 30 seconds instead of 2 hours.

Data accessibility is the single most important prerequisite. If your data lives in arcane systems only one person understands, you can’t take advantage of agentic AI. Fix that first.

Agentic AI is not RPA with better marketing; it's a fundamentally different capability. RPA excels at predictable, non-stochastic tasks, such as connecting to a mainframe, grabbing the value at row 30 column 40, and returning it via API. That's incredible, and it works. But if someone changes a form and that field shifts down one row, RPA blindly grabs the wrong value.

Agentic AI introspects. It asks itself whether it grabbed the right thing, spots when something's wrong, and tries a different approach until it succeeds. That nuance of recognizing when something is off is why certain tasks have stayed with humans. These systems can now replicate it.
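To make the contrast concrete, here is a minimal sketch of the two behaviors. It is a deterministic stand-in, not an agentic system: a real agent would use a model to judge and retry, while this only illustrates the validate-then-try-another-approach loop. The screen layout, the `looks_like_premium` check, and all function names are hypothetical.

```python
import re
from typing import Optional

# Hypothetical screen scrape: a list of rows, each a list of cell strings.
Screen = list[list[str]]

def rpa_extract(screen: Screen, row: int, col: int) -> str:
    """Fixed-position extraction: returns whatever sits at (row, col)."""
    return screen[row][col]

def looks_like_premium(value: str) -> bool:
    """Plausibility check (assumed format): an amount such as '12,500.00'."""
    return re.fullmatch(r"\d{1,3}(,\d{3})*\.\d{2}", value) is not None

def self_checking_extract(
    screen: Screen, row: int, col: int, label: str
) -> Optional[str]:
    """Try the expected cell, validate it, then fall back to a label search."""
    candidate = screen[row][col]
    if looks_like_premium(candidate):
        return candidate
    # Fallback: find the label anywhere and take the cell to its right.
    for cells in screen:
        for i, cell in enumerate(cells[:-1]):
            if cell == label and looks_like_premium(cells[i + 1]):
                return cells[i + 1]
    return None  # nothing plausible found: hand off to a human

# The form gained a row, so the premium moved from row 1 to row 2.
shifted = [
    ["Insured", "Acme Ltd"],
    ["Broker", "Smith & Co"],
    ["Premium", "12,500.00"],
]
print(rpa_extract(shifted, 1, 1))                       # Smith & Co
print(self_checking_extract(shifted, 1, 1, "Premium"))  # 12,500.00
```

The fixed-position call returns the broker name without complaint. The self-checking version notices the value is not a plausible premium and looks elsewhere.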

The practical implication: workflows requiring flexibility, interpretation, and adaptation – the exact profile of underwriting and claims operations – are now candidates for agentic automation. Not because the AI is replacing complex reasoning, but because it can now handle the variability that made automation impossible before.

The “chatbot era” was a misfire… the “workflow era” is what works. Adding a chat button to the bottom right of your platform delivered near-zero value. Analytics showed 99% of users ignored these features entirely. Why type a question in plain English when you can press the button that's always been there? The value calculation didn't work.

What does work is integrating agents into existing operational flows. Think of it as an employee who lives in Teams or Slack: it looks at a submission, makes a decision, shows its reasoning, recommends an action – bind, decline, refer – and delivers that in 30 seconds rather than 2 hours.

Your experienced underwriter spends their time reviewing agent decisions rather than doing extraction work. The result isn't headcount reduction; it's genuine capacity expansion. Same quality, 6-7x more premium bound.

Observability is the non-negotiable for regulated deployment. Microsoft's Azure AI partnership with Allstate highlights a crucial shift. Major insurers don't just want answers. They want evidence that answers are correct and systems to prove they're working.

When a regulator asks how you made a claims decision, you need to show the reasoning chain. Agentic systems can do this natively: here's what I considered, here's my decision, here's why. That observability is probably the most important component of safe AI use in insurance. Without it, you're trusting a roll of the dice.
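One way to picture "what I considered, my decision, and why" is as a structured record written for every agent decision. The sketch below is an assumption about what such a record could contain; the field names and the `DecisionRecord` class are illustrative, not a regulatory standard or any vendor's schema.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone

ALLOWED_ACTIONS = {"bind", "decline", "refer"}

@dataclass
class DecisionRecord:
    submission_id: str
    action: str             # one of ALLOWED_ACTIONS
    considered: list[str]   # facts the agent looked at
    rationale: str          # why it chose the action
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        # Reject anything outside the three actions named in the text.
        if self.action not in ALLOWED_ACTIONS:
            raise ValueError(f"unknown action: {self.action}")

    def to_audit_line(self) -> str:
        """Serialize to one JSON line suitable for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)

# Hypothetical example values.
record = DecisionRecord(
    submission_id="SUB-001",
    action="refer",
    considered=["Total insured value above delegated authority"],
    rationale="Exceeds authority limit; needs senior underwriter review",
)
print(record.to_audit_line())
```

Writing one line per decision keeps the log easy to search when someone later asks how a specific outcome was reached.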

Snorkel has published leaderboards testing AI models against 4,500 underwriting questions with verified answers from experienced underwriters. Claude Opus currently leads. As models perform above human baselines, it forces uncomfortable questions about whether purely human processes remain appropriate, or whether hybrid models with appropriate governance are actually superior.

What to do about this:

→ Audit your data accessibility this month. Ask: can a new technology partner access the data they need without navigating arcane legacy systems? If the answer involves "the one person who knows where that data lives," you have a blocker. Fix it before any AI initiative.

→ Kill your chatbot pilots and redesign around workflow integration. Stop testing AI as a button users can click. Test it as a colleague that surfaces decisions in the tools your team already uses, with full reasoning visible. Measure time-to-decision and decision quality, not feature adoption.

→ Build your observability layer before scaling. Your governance regime must include the ability to explain any AI decision to regulators. Define what human oversight looks like – maybe a quorum reviews 10% of all decisions – and document it now, not during the first regulatory inquiry.
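As a rough illustration of the "review 10% of all decisions" idea, the sketch below selects decisions for human review by hashing the decision ID. Hash-based sampling is an assumption on our part, chosen because the same ID always gives the same answer, which makes the selection reproducible in an audit. The function name and ID format are hypothetical.

```python
import hashlib

def needs_human_review(decision_id: str, sample_rate: float = 0.10) -> bool:
    """Deterministically select roughly `sample_rate` of decisions for review."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    digest = hashlib.sha256(decision_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # integer in [0, 2**64)
    return bucket < sample_rate * 2**64

# Hypothetical decision IDs.
ids = [f"DEC-{n:05d}" for n in range(10_000)]
selected = sum(needs_human_review(i) for i in ids)
print(f"{selected} of {len(ids)} decisions flagged for review")
```

The flagged share will land near 10% rather than exactly on it. If your governance document promises an exact percentage, a different selection method is needed.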

2. “AI-native” is a full business model, not just a technology choice: here’s how it works at Gyde, the first AI-native insurance, wealth, and health brokerage

Self-Funded podcast, The "Next Generation" Insurance Agency: What AI-Native Means (Feb 3, 2026)

Background: Will Johnson spent a decade at Oscar Health building consumer-centric insurance technology before founding Gyde, which he calls "the first AI-native insurance, wealth, and health brokerage." AI-native means that the core of the business is an AI platform. Gyde isn't selling software to agencies – it's acquiring them and deploying AI to 3-5x their client capacity. Here's how the model works and why the timing is finally right.

TLDR:

AI-native means building AI-first, not bolting AI onto existing processes. Gyde's technology team comes from Oscar and Stripe, building voice agents, renewal automation, and AI co-pilots as core infrastructure rather than add-on features.

The goal is "capacity amplification" – enabling the same team to service 3-5x more clients – not workforce replacement. When someone wants a human, they get connected immediately.

Three AI deployment phases: (1) automated renewals to eliminate Q4 chaos, (2) AI-powered client services for high-frequency/low-complexity tasks, (3) co-pilot infrastructure for cross-sell and product recommendations.

AI-native is an economic model, not just a technology choice. Johnson spent years thinking about how to transform insurance distribution through technology. The barrier wasn't technical; it was economic alignment.

"With just a software platform, I didn't know if you could really drive the change you'd like on a data intake and utilization basis." Agency information lived on Post-it notes, Excel spreadsheets, PDFs, and individual cell phones. No software platform could force behavior change to capture that data.

The solution: acquisition partnerships that create shared economic incentives. Gyde acquires agencies, keeps the owner and team in place, and deploys its AI platform.

The owner "puts on the Gyde jersey" while preserving their legacy and client relationships. This isn't traditional M&A seeking cost synergies. It's economic alignment that enables technology transformation.

Think capacity amplifiers, not replacement. Johnson addresses the "existential dread about AI" directly: "We're talking about capacity amplifiers, not any kind of replacement."

The platform aims to enable a core team to service 3-5x the number of clients they previously served. The human connection isn't eliminated – it's reserved for high-value interactions while AI handles high-frequency, low-complexity tasks.

The critical design principle: "As soon as they say they want to talk to a human, they're connected to a human."

This isn't the frustrating IVR maze of traditional call centers. AI handles what it can handle well, and immediately escalates what it can't. The result is better client experience and dramatically improved unit economics.

The three-phase AI deployment roadmap. Gyde's platform addresses three specific agency pain points in sequence.

Automated renewals. "Q4 is a total mess" for most agencies, with eight-page Word documents governing renewal workflows. AI takes on that administrative burden, freeing capacity for growth.

AI-powered client services infrastructure to answer questions and handle routine tasks, allowing account managers to focus on deep human-centric conversations.

Co-pilot infrastructure that recommends the right product bundles based on renewal applications and service interactions, transforming single-line agencies into multi-line advisors.

The sequencing matters. You can't cross-sell effectively if you're drowning in renewal administration. Each phase unlocks the next.

What to do about this:

→ Define what AI-native means for your organization. Is AI a bolt-on to existing processes, or is it core infrastructure that reshapes how work gets done?

→ Calculate your capacity amplification potential. If your team could service 3x the clients with AI handling routine tasks, what does that mean for growth economics?

→ Evaluate partnership models for technology deployment. If economic alignment is necessary to drive behavior change, consider whether traditional software sales can achieve your transformation goals, or whether deeper partnership structures are required.

3. Stop optimizing old processes – insurers who can't reimagine their entire business will lose to those who can

The Future of Insurance podcast: Industry Leaders - 2026 Intelligent Insurance Trends You Need to Stay Competitive and Accelerate Growth (Feb 3, 2026)

Background: BCG reports that insurance has adopted AI faster than any industry except tech. Yet most carriers haven't scaled their pilots because they're applying new technology to old processes. Carriers who use AI just to cut costs will struggle to grow their business by 2027.

TLDR:

The insurance industry has spent 40+ years incrementally optimizing processes that shouldn't exist in the first place. AI without reimagining processes just accelerates inefficiency at scale.

Economic impact sits in loss ratios, risk selection, and product optimization, not in the G&A expense lines where most digital initiatives focus. The real opportunity is billions on the balance sheet, not hundreds of thousands in operational savings.

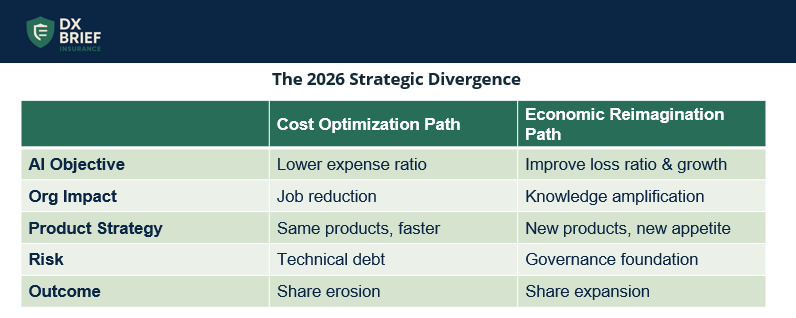

By the end of 2026, carriers who used AI to eliminate jobs will be scrambling to figure out how to grow again, while those who used AI to capture institutional knowledge and enable new products will be pulling ahead.

You can’t shrink to grow. "The carriers who are saying 'I want to leverage AI just to lower my cost' are going to miss the mark. They're going to start seeing their share erode, and not just their share relative to competitors, but their share across the value web."

The instinct is understandable. AI promises efficiency. Boards want ROI. The path of least resistance is automating existing claims processes and underwriting workflows. But that approach has a ceiling, and most carriers are already hitting it.

"It's time to actually start looking at growth." The winning carriers are shifting AI investment from internal operations and claims toward appetite expansion, new services, and selling more accurately on existing products.

Peter Drucker's observation applies perfectly: stop making more efficient that which should not be done at all. "We fixate so much on expense ratio and operational efficiency. If I had a dime for every startup that says 'we're going to save so much money if they just implement this bolt-on piece of technology.'"

The problem? "Economics that move the needle don't typically sit in the G&A operational expense line items. They sit in much bigger things – frequency and severity, replacement costs, how the price of supply and lumber is exponentially going up after a major event."

When you reimagine around the economics that actually matter – loss ratios, risk selection, pricing optimization – technology becomes an enabler of fundamentally different products and customer relationships, not a cost reduction tool.

Technical debt from ungoverned AI is accumulating faster than anyone admits. The Bill Gates quote from 1995 applies: "We always tend to overestimate the impact of technology in the short term and underestimate it in the long."

The carriers rushing into AI without governance foundations will spend the next several years cleaning up, while competitors who took time to structure governance properly will be deploying at scale.

"Insurance won't be disrupted by new risk. It will be disrupted by how systems decide. The biggest failures won't come from underwriting. They'll come from opaque automation and AI acting faster than governance can keep up."

What to do about this:

→ Audit where your AI investment is concentrated. If it's primarily in claims processing and internal operations, you're optimizing for cost reduction with a ceiling. Shift budget toward appetite expansion, new product development, and growth enablement.

→ Run the Peter Drucker test on your current digital initiatives. For each AI pilot, ask: "Should this process exist at all in its current form?" If you're automating something that shouldn't be done in the first place, stop and redesign the process before applying technology.

→ Quantify the economic opportunity beyond G&A. Calculate the P&L impact of a 1% improvement in loss ratio versus a 10% reduction in operational expenses. This exercise typically reveals where the real value sits, and it's rarely in the expense lines.
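The comparison in that last step can be sketched in a few lines. Two assumptions are baked in: "1% improvement in loss ratio" is read as one percentage point of earned premium, and both input figures are placeholders rather than data from any carrier. The function names are ours.

```python
def loss_ratio_gain(earned_premium: float, points: float = 1.0) -> float:
    """Gain from improving the loss ratio by `points` percentage points."""
    return earned_premium * points / 100.0

def opex_gain(
    earned_premium: float, ga_expense_ratio: float, reduction: float = 0.10
) -> float:
    """Savings from cutting G&A expenses by `reduction` (0.10 means 10%)."""
    return earned_premium * ga_expense_ratio * reduction

# Hypothetical carrier: replace both inputs with your own figures.
premium = 1_000_000_000   # earned premium (assumed)
ga_ratio = 0.08           # G&A as a share of premium (assumed)

print(f"1-point loss ratio improvement: {loss_ratio_gain(premium):,.0f}")
print(f"10% G&A reduction:              {opex_gain(premium, ga_ratio):,.0f}")
```

Under this reading, the one-point loss ratio improvement is worth more than the 10% expense cut whenever G&A is below 10% of premium, since 0.01 × premium exceeds 0.10 × G&A ratio × premium exactly when the G&A ratio is under 0.10.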

Disclaimer

This newsletter is for informational purposes only and summarizes public sources and podcast discussions at a high level. It is not legal, financial, tax, security, or implementation advice, and it does not endorse any product, vendor, or approach. Insurance environments, laws, and technologies change quickly; details may be incomplete or out of date. Always validate requirements, security, data protection, regulatory compliance, and risk implications for your organization, and consult qualified advisors before making decisions or changes. All trademarks and brands are the property of their respective owners.