AI Is Compressing Prices and Exposing Workforce Gaps

Welcome to DX Brief - Insurance, where every week we interview practitioners and distill industry podcasts and conferences into what you need to know.

In today's issue:

InsurTech founder shares lessons about price compression from AI-native competitors, and why the workforce retraining challenge will define winners and losers

Your AI strategy should chase customer needs (not cost-cutting) and work backwards

80% of your employees are already using AI – what to do before it backfires

1. InsurTech founder shares lessons about price compression from AI-native competitors, and why the workforce retraining challenge will define winners and losers

Business Technology Perspectives podcast: James Benham on Why Insurance Is One of the Most Interesting Tech Problems (Jan 27, 2026)

Background: James Benham has spent 25 years building enterprise software for insurance. His company Terra competes against 32 core systems in workers' compensation. His insight? Insurance looks boring from the outside but is anything but, because every activity on the planet requires insurance to happen. Twenty different policies protect a single football match. And now, AI-native competitors are about to compress pricing industry-wide while legacy insurers struggle with a strange paradox: surplus workers in one category, unfilled jobs in another, and no bridge between them.

TLDR:

Pace of execution now defines survival. AI has compressed development timelines so dramatically that go-to-market speed matters more than technical moats.

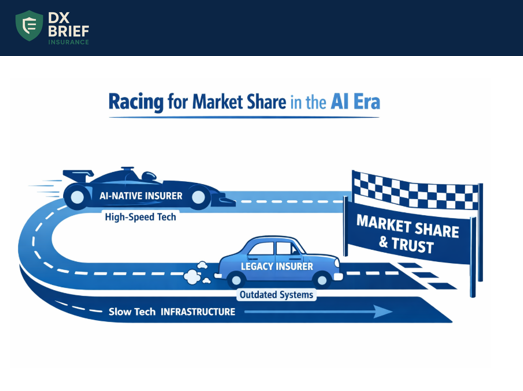

The coming price compression is real: AI-efficient insurers will cut rates to chase market share, and legacy players without "fat left to cut" will struggle to compete.

The workforce challenge isn't layoffs; it's retraining surplus manual workers into high-demand roles, and most insurers don't have the training infrastructure to make that transition.

Your technical moat is eroding. Go-to-market speed is the new moat. The cost of developing applications is dropping so fast that competitors can replicate your technology quickly.

In the past, you could build extensive proprietary technology and create a moat around your product. Now, with AI accelerating development, obsolescence rates are compressing. If you don't get to market fast, you're done.

The race isn't about who builds the best tech. It's about who commercializes fastest, gains market share, and builds trust. Terra competes against 32 core systems. The technology is increasingly similar; the differentiation is execution.

Price compression is coming, and some carriers won't survive it. As AI drives vast automation, the most efficient insurers will cut prices to chase market share. They'll have the operational efficiency to absorb lower margins while growing volume.

Legacy carriers with a slow pace of change will face a brutal choice: match prices they can't sustain or lose market share they can't afford to lose. Insurers that haven't been investing in efficiency will find they have "no fat left to cut" when the price war begins.

The workforce paradox is your biggest operational risk. Benham sees a pattern across his insurance clients: surplus staff in manual, repetitive roles and unfilled positions in high-demand technical categories, with no training program to move people from one to the other.

The companies that solve this will have a workforce that evolves with automation. The companies that don't will face the impossible choice of layoffs in one department while recruiting externally for another. The retraining infrastructure doesn't exist at most carriers, and building it takes years.

What to do about this:

→ Benchmark your product development cycle time. Measure how long it takes from concept to production deployment for a new capability. If it's longer than 6 months, you're vulnerable to faster competitors. Set a target to cut that time in half within 18 months.

→ Model a 15% rate reduction scenario. Ask your actuaries and finance team: what would happen if our top three competitors cut rates by 15%? Identify which books of business would be most vulnerable and where you'd need operational efficiency gains to respond.

→ Audit your workforce flexibility. Catalog how many employees are in roles that AI will automate within 3 years. Then catalog your unfilled technical positions. If the gap is large and you don't have a retraining program, start building one now, or plan for the organizational trauma of simultaneous layoffs and external hiring.
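
To make the 15% scenario concrete, here is a minimal Python sketch of the arithmetic. It assumes a deliberately simple model: rates drop, but exposures, losses, and expenses stay where they are. The `Book` class, the book names, and every figure are hypothetical placeholders, not data from the podcast; your actuaries will want a richer model that includes volume and retention effects.

```python
from dataclasses import dataclass


@dataclass
class Book:
    """One book of business. All figures are hypothetical inputs."""
    name: str
    premium: float        # current annual written premium
    loss_ratio: float     # losses / premium at current rates
    expense_ratio: float  # expenses / premium at current rates


def combined_ratio_after_cut(book: Book, rate_cut: float) -> float:
    """Combined ratio if rates drop by rate_cut and nothing else changes."""
    losses = book.premium * book.loss_ratio
    expenses = book.premium * book.expense_ratio
    new_premium = book.premium * (1 - rate_cut)
    return (losses + expenses) / new_premium


def expense_cut_needed(book: Book, rate_cut: float) -> float:
    """Fraction of expenses to remove to hold today's combined ratio."""
    target = book.loss_ratio + book.expense_ratio
    losses = book.premium * book.loss_ratio
    expenses = book.premium * book.expense_ratio
    new_premium = book.premium * (1 - rate_cut)
    allowed_expenses = target * new_premium - losses
    return 1 - allowed_expenses / expenses


# Hypothetical books, ranked from most to least exposed to a 15% cut.
books = [
    Book("workers_comp", 100.0, 0.65, 0.30),
    Book("commercial_auto", 80.0, 0.72, 0.27),
]
ranked = sorted(books, key=lambda b: combined_ratio_after_cut(b, 0.15), reverse=True)
for book in ranked:
    print(
        f"{book.name}: combined ratio {combined_ratio_after_cut(book, 0.15):.3f}, "
        f"expense cut needed {expense_cut_needed(book, 0.15):.1%}"
    )
```

Under these assumptions, a book running at a 0.95 combined ratio moves to roughly 1.12 after a 15% rate cut, and holding the original ratio would mean removing 47.5% of its expenses. That is the "fat left to cut" question in numbers.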

2. Your AI strategy should chase customer needs (not cost-cutting) and work backwards

FE Insurance Summit session: AI and Machine Learning in Insurance Transformation (Jan 27, 2025)

Background: Most insurers start their AI journey with chatbots and productivity tools – reducing FTEs and automating repetitive tasks. But the real ROI comes from growth use cases, not headcount reduction. One insurer achieved 7-minute health claim pre-authorizations. Another hit double-digit conversion rates on outbound calls by using AI to predict exactly when, how, and what to offer each customer. Here's the framework that separates AI experiments from AI that transforms your P&L.

TLDR:

Productivity use cases (chatbots, document processing) won't prove ROI. Pivot to growth use cases in underwriting and claims that do things humans physically can’t do at scale.

Build your data architecture before your AI architecture. Andrej Karpathy's 90/10 rule applies: superior models with poor data lose to average algorithms with excellent data.

Form cross-functional "pit crew" teams that blend data scientists, ML engineers, product managers, and business analysts. Siloed AI projects fail.

Think C2B, not B2C, and watch your conversion rates double. One panelist shared results from a financial services outbound calling operation that achieved double-digit conversion rates – in an industry where cold calling typically yields 0.5-3%.

The difference wasn't better scripts or more calls. It was AI predicting when each customer was available, their preferred channel (call vs. SMS vs. notification), and the right product for their financial position.

The result: calls felt personal, not cold. Customers responded with phrases like "this company knows me." Call times dropped because conversations became precise. That's C2B thinking: starting with what the customer needs and working backward.

Data architecture beats AI architecture every time. Dr. Anand Mahalingam from Digit Insurance cited Andrej Karpathy's famous observation: models account for only 10% of success; data quality drives the other 90%.

You can download open-source models from Hugging Face tomorrow, and so can your competitors. What you cannot download is your proprietary data. The insurers winning the AI race are building data infrastructure first, then layering AI on top. They're investing in ML ops from day one so experiments can be repeated and scaled.

Flexibility is your moat – not any single model. AI is evolving so fast that knowledge from a month ago may already be obsolete. One panelist recommended building infrastructure that can quickly swap models rather than betting everything on one vendor.

Don't spend heavily on dependencies with a particular model; spend on flexible infrastructure that can host open-source models in-house and pivot quickly when the next breakthrough arrives.
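
One way to picture "flexible infrastructure" is a thin interface between your business logic and whichever model sits behind it. The Python sketch below is an illustration under stated assumptions, not the panelists' design: `TextModel`, `EchoModel`, `UppercaseModel`, `REGISTRY`, and `summarize_claim` are all hypothetical names, and the two backends are trivial stand-ins rather than real vendor or open-source model clients.

```python
from typing import Callable, Protocol


class TextModel(Protocol):
    """The only contract business code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; imagine an in-house open-source model here."""

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class UppercaseModel:
    """Second stand-in backend; imagine a hosted vendor model here."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


# Swapping models becomes a one-line config change, not a rewrite.
REGISTRY: dict[str, Callable[[], TextModel]] = {
    "echo": EchoModel,
    "upper": UppercaseModel,
}


def load_model(name: str) -> TextModel:
    """Look up a backend by its configured name."""
    try:
        factory = REGISTRY[name]
    except KeyError:
        raise ValueError(f"unknown model backend: {name}") from None
    return factory()


def summarize_claim(model: TextModel, note: str) -> str:
    """Business logic sees the interface, never a specific vendor."""
    return model.complete(f"Summarize this claim note: {note}")


print(summarize_claim(load_model("echo"), "water damage in basement"))
```

The design choice is that prompts, workflows, and tests live above the interface, so replacing a backend touches one registry entry. Real backends will differ in quality and behavior, so a swap still needs evaluation before it goes live.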

What to do about this:

→ Audit your AI portfolio for growth vs. productivity split. Categorize every current AI initiative. If 80% are productivity plays (chatbots, document extraction), reallocate resources toward one underwriting or claims use case that does something humans cannot do, like running 50,000 Monte Carlo simulations to quantify cyber risk exposure.

→ Run a data architecture assessment before your next AI investment. Commission a 30-day audit of your data estate. Map every data silo, score data quality by domain, and identify the three biggest gaps between what your AI needs and what your data can deliver.

→ Build model flexibility into your architecture. Require your technology team to demonstrate they can swap underlying AI models within 30 days. If you're locked into a single vendor or model, you're already falling behind.
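
For readers who have not seen one, a Monte Carlo exposure estimate of the kind mentioned above can be sketched in a few lines of standard-library Python. This is a toy frequency-severity model, not the method any panelist described: it assumes incidents per year follow a Poisson distribution and each incident's cost is lognormal. The parameter values (`lam`, `mu`, `sigma`) are made up for illustration and would need to be fitted to real claims or threat data.

```python
import math
import random
import statistics


def poisson_draw(rng: random.Random, lam: float) -> int:
    """Knuth's method for one Poisson draw; adequate for small lam."""
    threshold = math.exp(-lam)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_annual_losses(n_sims=50_000, lam=0.3, mu=12.2, sigma=1.2, seed=42):
    """Return one simulated total cyber loss per simulated year.

    lam: assumed average incidents per year (hypothetical).
    mu, sigma: assumed lognormal severity parameters (hypothetical).
    """
    rng = random.Random(seed)
    losses = []
    for _ in range(n_sims):
        n_events = poisson_draw(rng, lam)
        losses.append(sum(rng.lognormvariate(mu, sigma) for _ in range(n_events)))
    return losses


def summarize(losses):
    """Average loss, 99th-percentile loss, and share of years with any loss."""
    return {
        "mean": statistics.fmean(losses),
        "p99": statistics.quantiles(losses, n=100)[98],
        "share_with_loss": sum(1 for x in losses if x > 0) / len(losses),
    }


summary = summarize(simulate_annual_losses())
print({key: round(value, 3) for key, value in summary.items()})
```

The point of running tens of thousands of years is the tail: the 99th-percentile figure, not the average, is what tells you how bad a bad year could be under the assumed parameters.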

3. 80% of your employees are already using AI – what to do before it backfires

Privately Intelligent podcast: Privately Intelligent Ep11: Why Secure, Private AI Is the Future of Insurance Operations, with Michelle Berndt, President & Partner at Insure TechHub (Jan 27, 2025)

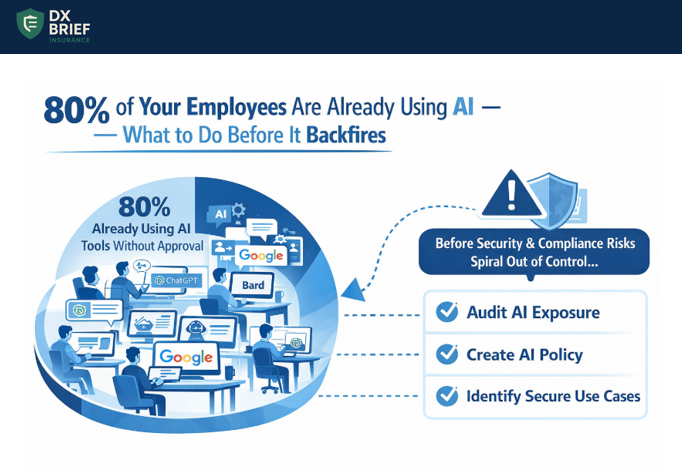

Background: 80% of your employees are already using AI tools privately, often without leadership knowing. Michelle Berndt, who spent 8 years in insurance operations and led national training programs, explains why this isn't a technology problem – it's a governance crisis waiting to happen. She has seen agencies achieve a 40% lift in workload capacity by switching to secure AI platforms. The catch? Most agencies are still operating without any AI policy at all.

TLDR:

80% of employees are using AI tools without approval, creating compliance and security risks.

The "innovation paradox" is real: companies tell employees to be innovative but then fail to provide secure tools or training, forcing staff to find workarounds.

Agencies seeing 40% productivity gains list what requires human touch on one side and what can be standardized on the other, then implement secure AI for the standardized tasks.

Your employees aren't the problem. Your lack of AI governance is. When employees hear "be innovative," they reach for what they know: ChatGPT, Google, whatever's fastest. Michelle has seen this pattern across healthcare and insurance: "I don't think employees ever do it to harm the company. They do it because it's the only tools they know."

The issue isn't employee behavior. It's that leaders are asking for innovation while providing no approved pathways to achieve it.

Consider the compliance exposure: insurance calls are recorded specifically because one wrong detail about pre-existing condition waiting periods or maternity coverage can jeopardize an entire policy.

Now imagine agents pulling answers from unvetted AI sources during high-volume enrollment periods. As Michelle puts it: "The member remembers everything you say. If you told them maternity was covered when in reality it wasn't, that is coming back on the company and the insurance agent."

The training gap is bigger than you think. Most agencies have a "folder" or "training library" somewhere. But Michelle asks the harder question: "Can your employees interact with it?"

Traditional documentation is static. When new hires are halfway through a presentation and have questions, they can't pause and ask. They either interrupt someone, guess, or – increasingly – ask ChatGPT.

The opportunity is to create an "interactive knowledge base" where employees can query company-specific documents in real-time, getting answers tailored to your policies, products, and procedures.

The shift from static documentation to conversational AI isn't a nice-to-have. It's how you prevent the misinformation that creates E&O exposure.

Don't restrict innovation – channel it. Michelle's framework is simple: grab a piece of paper. On one side, write everything that must remain human touch, such as complex decisions, empathy-driven conversations, and high-stakes client interactions. On the other side, list what's repetitive and could be standardized: proposal generation, sales pitch creation, routine policy questions.

The agencies seeing 40% workload improvements aren't replacing humans. They're redirecting human energy from repetitive tasks to relationship-building.

One insight that surprised Michelle: "The hardest thing for salespeople to do is their sales pitch." AI can generate a first draft of a sales pitch or proposal in 30 seconds, freeing producers to focus on actually selling.

What to do about this:

→ Audit your current AI exposure this week. Survey your team anonymously: What AI tools are you using? For what tasks? You'll likely discover widespread adoption you didn't know existed… and that's your starting point for governance.

→ Create an AI standard operating procedure before Q2. Document what AI tools are approved, what data can never be entered into AI systems, and who owns document accuracy in your knowledge base. Make violations a compliance issue, not a suggestion.

→ Implement the "human touch vs. standardize" exercise with your leadership team. Spend 60 minutes mapping every major workflow. Identify the 3-5 repetitive tasks consuming the most producer time and pilot secure AI solutions for those specific use cases.
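
If your technology team asks what an enforceable AI policy might look like in practice, one possible starting point is a pre-send check that runs before any prompt leaves the building. The Python sketch below is an assumption-heavy illustration: the tool name in `APPROVED_TOOLS` is hypothetical, and the two patterns (a US Social Security number format and a simple email shape) are examples only, nowhere near a complete list of what your SOP should block.

```python
import re

# Hypothetical allowlist; replace with the tools your SOP approves.
APPROVED_TOOLS = {"internal-assistant"}

# Example patterns only; a real policy would cover far more data types.
BLOCKED_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def check_prompt(tool: str, prompt: str) -> list[str]:
    """Return a list of policy violations; an empty list means OK to send."""
    violations = []
    if tool not in APPROVED_TOOLS:
        violations.append(f"tool not approved: {tool}")
    for label, pattern in BLOCKED_PATTERNS.items():
        if pattern.search(prompt):
            violations.append(f"restricted data detected: {label}")
    return violations


print(check_prompt("internal-assistant", "Draft a renewal reminder for small groups"))
print(check_prompt("chatgpt", "Member SSN 123-45-6789 asked about maternity coverage"))
```

Pattern matching catches only the obvious cases, so treat a check like this as a backstop for the written policy and training, not a substitute for them.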

Disclaimer

This newsletter is for informational purposes only and summarizes public sources and podcast discussions at a high level. It is not legal, financial, tax, security, or implementation advice, and it does not endorse any product, vendor, or approach. Insurance environments, laws, and technologies change quickly; details may be incomplete or out of date. Always validate requirements, security, data protection, regulatory compliance, and risk implications for your organization, and consult qualified advisors before making decisions or changes. All trademarks and brands are the property of their respective owners.